"The best architecture is the simplest one that meets your requirements. Complexity is the enemy of reliability, but underengineering is the enemy of scalability. Know where you are on the spectrum."

A Pragmatic AI Architect

Introduction

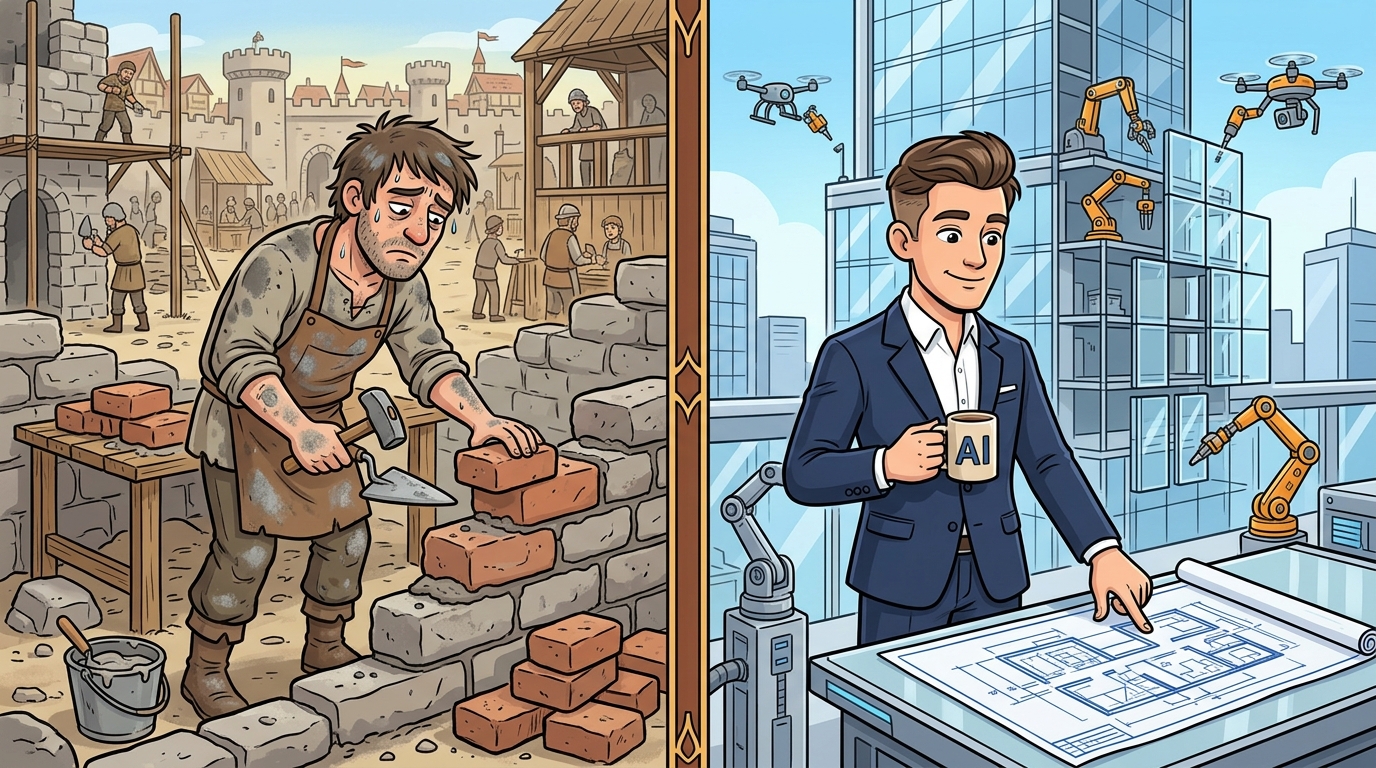

When building AI products, teams often make one of two mistakes. The first is overengineering: building elaborate multi-agent systems with orchestration layers, vector databases, and autonomous decision-making when a well-crafted prompt would solve the problem. The second is underengineering: wrapping an LLM call in a Flask endpoint and calling it production-ready, only to discover scale problems, hallucination risks, and zero observability when things go wrong.

This section establishes a framework for thinking about AI product architectures. You will learn to map requirements to architectural complexity, apply YAGNI (You Aren't Gonna Need It) principles to AI systems, and understand the spectrum from simple LLM wrappers to complex multi-agent architectures.

The Architecture Spectrum

AI product architectures fall along a spectrum from trivial to complex. Understanding this spectrum helps you choose the right starting point and know when to evolve.

Level 1: Simple LLM Wrapper

The simplest architecture wraps an LLM API call in a web service. The user sends a prompt, the service forwards it to the LLM, and returns the response. This pattern suits internal tools, prototypes, and low-stakes applications where errors have no significant consequences.

Complexity: Low. Ideal for: FAQ bots, internal chatbots, prototyping experiments
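As a rough illustration of the Level 1 pattern, the sketch below forwards a prompt to a model and returns the reply. The names `handle_request`, `LLMClient`, `SYSTEM_PREFIX`, and `fake_llm` are assumptions made for this example. The model is passed in as a plain function so the sketch runs without any provider SDK; in a real service that function would wrap your provider's API call.

```python
from typing import Callable

# Hypothetical type: any function that takes a prompt and returns model text.
LLMClient = Callable[[str], str]

# Illustrative instruction text, not taken from any real product.
SYSTEM_PREFIX = "You are a helpful internal FAQ assistant.\n\n"


def handle_request(user_prompt: str, llm: LLMClient) -> dict:
    """Forward the user's prompt to the LLM and return its response."""
    if not user_prompt.strip():
        # Reject empty input before spending a model call on it.
        return {"ok": False, "error": "empty prompt"}
    reply = llm(SYSTEM_PREFIX + user_prompt)
    return {"ok": True, "answer": reply}


def fake_llm(prompt: str) -> str:
    """Stand-in for a real provider call so the sketch runs offline."""
    return f"(model reply to {len(prompt)} chars)"


if __name__ == "__main__":
    print(handle_request("How do I reset my password?", fake_llm))
```

Everything a production system needs beyond this (logging, evaluation, fallbacks) is deliberately absent, which is why the pattern fits prototypes and low-stakes tools.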

Level 2: Prompted LLM with Tools

This architecture adds tool use capabilities to the LLM. The model can call external APIs, search the web, run code, or access databases. GitHub Copilot exemplifies this pattern: the IDE provides context, the model suggests completions, and the human accepts or rejects. The architecture maintains human oversight through suggestion-and-approval flows.

Complexity: Low-Medium. Ideal for: Coding assistants, document processing, customer service augmentation
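One way to picture the suggestion-and-approval flow is a small dispatcher that runs a tool only after a human approves the proposed call. This is a minimal sketch under stated assumptions: `ToolCall`, `TOOLS`, and `run_with_approval` are hypothetical names, the two tools are toys, and the model's proposal is represented as a ready-made `ToolCall` rather than parsed from real model output.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ToolCall:
    """A tool invocation proposed by the model (hypothetical shape)."""
    name: str
    argument: str


# Hypothetical registry of tools the model is allowed to request.
TOOLS: Dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
    "upper": lambda text: text.upper(),
}


def run_with_approval(call: ToolCall, approve: Callable[[ToolCall], bool]) -> str:
    """Execute a proposed tool call only if it is known and a human approves it."""
    if call.name not in TOOLS:
        return f"rejected: unknown tool {call.name!r}"
    if not approve(call):
        return "rejected: human declined"
    return TOOLS[call.name](call.argument)


if __name__ == "__main__":
    proposal = ToolCall("word_count", "ship the simplest thing")
    print(run_with_approval(proposal, approve=lambda c: True))
```

Keeping the approval step as an injected function makes it easy to start with a human reviewer and later relax it for tools that prove safe.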

Level 3: Retrieval-Augmented Generation (RAG)

RAG architectures add a retrieval layer that provides context to the LLM. When a user asks a question, the system retrieves relevant documents from a knowledge base, augments the prompt with this context, and generates an answer grounded in the retrieved information. When retrieval surfaces the right documents, this pattern substantially reduces hallucination risk, and it enables the system to answer questions about proprietary information.

Complexity: Medium. Ideal for: Customer support, legal research, medical information systems, enterprise knowledge management
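The retrieve, augment, generate sequence can be sketched in a few lines. The example below is an illustration only: it ranks documents by keyword overlap instead of embeddings, the documents in `DOCS` are made-up sample data, and `retrieve`, `build_prompt`, and `answer` are hypothetical helper names. A production system would typically swap the retrieval step for a proper search index.

```python
import re
from typing import Callable, List

# Made-up sample knowledge base for illustration.
DOCS = [
    "Refunds are issued within 14 days of a return request.",
    "Orders ship within 2 business days.",
    "Support is available by email on weekdays.",
]


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: List[str], k: int = 1) -> List[str]:
    """Return the k documents sharing the most tokens with the question."""
    q_tokens = tokenize(question)
    ranked = sorted(docs, key=lambda d: len(q_tokens & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: List[str]) -> str:
    """Augment the question with the retrieved context."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"


def answer(question: str, docs: List[str], llm: Callable[[str], str]) -> str:
    """Retrieve, augment, then generate with the supplied model function."""
    return llm(build_prompt(question, retrieve(question, docs)))


if __name__ == "__main__":
    # The identity function stands in for a model so the prompt is visible.
    print(answer("How long do refunds take?", DOCS, llm=lambda p: p))
```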

Level 4: Agentic Multi-Agent Systems

At the most complex end of the spectrum, agents can plan, use tools, delegate subtasks to other agents, and execute multi-step workflows with minimal human intervention. These systems require sophisticated orchestration, robust error handling, and careful design to ensure alignment and safety.

Complexity: High. Ideal for: Autonomous research, complex document processing, end-to-end workflow automation
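To make the orchestration and error-handling requirement concrete, here is a minimal sketch of an orchestrator that delegates subtasks to a worker, retries a bounded number of times, and escalates instead of looping forever. The names `run_plan` and `Worker`, the retry limit, and the result shape are all assumptions for illustration; real agent frameworks differ considerably.

```python
from typing import Callable, List, Tuple

# Hypothetical worker agent: takes a subtask, returns (succeeded, output).
Worker = Callable[[str], Tuple[bool, str]]


def run_plan(subtasks: List[str], worker: Worker, max_attempts: int = 2) -> dict:
    """Run subtasks in order; escalate to a human when one keeps failing."""
    completed: List[str] = []
    for task in subtasks:
        for _attempt in range(max_attempts):
            ok, output = worker(task)
            if ok:
                completed.append(output)
                break
        else:
            # Every attempt failed: stop and hand over rather than continue blindly.
            return {"status": "escalated", "failed_task": task, "completed": completed}
    return {"status": "done", "completed": completed}


if __name__ == "__main__":
    def flaky_worker(task: str) -> Tuple[bool, str]:
        # Toy worker that cannot handle the "verify" subtask.
        return (task != "verify", task.upper())

    print(run_plan(["search", "summarize", "verify"], flaky_worker))
```

The bounded retry and the explicit escalation result are the parts worth keeping even when the rest of the orchestration is replaced.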

Key Insight: Start Simple, Evolve as Needed

The most successful AI products start at the simplest level that meets requirements and evolve when actual usage reveals the need for additional complexity. Premature optimization, especially in AI systems, wastes development time and adds maintenance burden without proportionate benefit.

YAGNI Applied to AI Architecture

YAGNI (You Aren't Gonna Need It) originated in extreme programming, but it applies with special force to AI systems. In traditional software you can often estimate scale requirements up front; AI products tend to discover their actual needs only after deployment. User behavior, query patterns, and failure modes are difficult to predict without production data.

The YAGNI AI Decision Tree

Before adding architectural complexity, ask these questions:

When Ignoring YAGNI Leads to Overengineering

Common overengineering patterns in AI products include building multi-agent systems for single-step tasks, implementing vector databases when simple keyword search would suffice, adding complex memory systems when stateless interactions are appropriate, and designing for millions of users when the product has hundreds of active users.

When Misapplying YAGNI Leads to Underengineering

Underengineering manifests as skipping observability because the prototype works fine in development, ignoring latency optimization because response times seem acceptable, omitting guardrails because the test cases pass, and deferring security review because access is limited to internal users. These shortcuts compound into production incidents.

The Production Maturity Line

Regardless of architectural complexity, every production AI product needs three things: observability (you can see what is happening), evaluability (you can measure quality), and recoverability (you can fix problems). These non-functional requirements apply to a one-prompt prototype and a hundred-agent system alike.
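The three requirements can be attached even to a single model call. The sketch below is one possible shape, not a prescribed implementation: `guarded_call`, `FALLBACK`, the `evaluate` scoring function, and the `min_score` threshold are hypothetical. It logs each call (observability), scores the reply (evaluability), and returns a fallback on failure or low quality (recoverability).

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("ai_product")

# Illustrative fallback message returned when the call fails or scores poorly.
FALLBACK = "Sorry, I can't answer that right now."


def guarded_call(
    prompt: str,
    llm: Callable[[str], str],
    evaluate: Callable[[str, str], float],
    min_score: float = 0.5,
) -> dict:
    """Call the model with logging, a quality score, and a fallback path."""
    start = time.perf_counter()
    try:
        reply = llm(prompt)
    except Exception:
        # Recoverability: a provider failure degrades to a safe answer.
        logger.exception("llm call failed")
        return {"answer": FALLBACK, "score": None, "recovered": True}

    # Evaluability: every reply gets a numeric quality score.
    score = evaluate(prompt, reply)
    latency_ms = (time.perf_counter() - start) * 1000

    # Observability: record what happened for later inspection.
    logger.info("prompt_chars=%d latency_ms=%.1f score=%.2f", len(prompt), latency_ms, score)

    if score < min_score:
        return {"answer": FALLBACK, "score": score, "recovered": True}
    return {"answer": reply, "score": score, "recovered": False}
```

How `evaluate` is implemented (heuristics, a reference set, or a second model) is left open here; the point is that the hook exists from day one.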

The "start simple" advice is sometimes misinterpreted as "simple architectures are always better." But starting too simple can be underengineering. A simple LLM wrapper may be inappropriate for a high-stakes medical application, even though it is cheaper and faster to build. The right architecture matches your requirements for accuracy, safety, and reliability. YAGNI applies to speculative future scale, not to known present risks.

Choosing the Right Architecture

Architecture selection depends on several factors considered together. Start with the latency budget: simple LLM wrappers suit response-time budgets under two seconds, while tool-augmented systems and RAG architectures typically need budgets of up to five seconds, particularly when retrieval operations are involved. Agentic multi-agent systems operate on a fundamentally different time scale, where minutes may be acceptable because complex reasoning and multi-step execution take more time.

Hallucination tolerance varies significantly across use cases, and architectures differ in how much hallucination risk they carry. Simple LLM wrappers carry the highest risk, so high-stakes applications built on them require careful prompt engineering and evaluation. Tool-augmented systems carry medium-to-low risk because external tool calls provide a way to verify outputs. RAG architectures can achieve very low hallucination rates by grounding responses in retrieved documents. Agentic systems vary widely depending on the specific task and the guardrails implemented.

Domain knowledge requirements create a clear differentiation point. Simple LLM architectures work well when general knowledge suffices. Tool-augmented systems extend this to combine general knowledge with tool-accessed information. RAG architectures excel when proprietary documents and domain-specific knowledge bases are central to the use case. Agentic systems span the full range depending on their intended purpose.

Human oversight requirements scale with autonomy and complexity. Simple LLM wrappers in low-stakes settings typically require minimal or no human oversight. Tool-augmented systems benefit from approval flows where the human reviews and validates suggestions before actions are taken. RAG systems work best with output review mechanisms. Agentic architectures need escalation paths that allow human intervention when the system encounters situations that are beyond its competence or that require judgment.

Operational complexity increases substantially across the spectrum. Simple LLM wrappers maintain low operational overhead. Tool-augmented systems introduce medium complexity due to tool integration and state management. RAG architectures demand medium-high operational investment for index management, embedding pipelines, and retrieval optimization. Agentic multi-agent systems carry the highest operational burden, requiring sophisticated orchestration, error handling, and monitoring infrastructure.
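The factors above can be turned into a first-pass selection helper. The sketch below is a deliberate simplification under stated assumptions: `Requirements`, `suggest_level`, and the rule ordering are hypothetical, the two-second and five-second figures mirror the rough numbers in the text, and the 60-second figure for agentic systems is a placeholder standing in for "minutes". Treat the output as a conversation starter, not a verdict.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Requirements:
    latency_budget_s: float
    needs_proprietary_knowledge: bool
    needs_external_actions: bool
    needs_multi_step_autonomy: bool


# Rough latency each level tends to need, in seconds (illustrative values).
TYPICAL_LATENCY_S = {"wrapper": 2.0, "tools": 5.0, "rag": 5.0, "agentic": 60.0}


def suggest_level(req: Requirements) -> Tuple[str, List[str]]:
    """Suggest the simplest level that covers the stated needs, plus warnings."""
    if req.needs_multi_step_autonomy:
        level = "agentic"
    elif req.needs_proprietary_knowledge:
        level = "rag"
    elif req.needs_external_actions:
        level = "tools"
    else:
        level = "wrapper"

    warnings: List[str] = []
    if req.latency_budget_s < TYPICAL_LATENCY_S[level]:
        warnings.append(
            f"latency budget {req.latency_budget_s}s is tighter than "
            f"the ~{TYPICAL_LATENCY_S[level]}s this level typically needs"
        )
    return level, warnings


if __name__ == "__main__":
    print(suggest_level(Requirements(1.0, True, False, False)))
```

Hallucination tolerance and oversight needs are not modeled here; they would add further checks in the same style.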

Architectural Evolution Patterns

As AI products mature, architectures typically evolve along predictable paths. Understanding these evolution patterns helps you plan ahead without overbuilding.

Common Evolution Paths

Path A: Simple to RAG. A customer service chatbot starts with general LLM responses. After launch, users ask about order status and refund policies. The team adds a RAG system for policy documents and integrates with the order database through tools.

Path B: RAG to Agentic. A legal research assistant begins as a document retrieval system. Users begin asking complex questions requiring multi-step research across multiple databases. The team adds agentic capabilities for autonomous research flows.

Path C: Single to Multi-Agent. A coding assistant starts with one agent reviewing code. As the product grows, separate agents handle security scanning, performance analysis, and documentation generation, coordinated by an orchestration layer.

Who: A healthcare analytics startup building an AI assistant for hospital administrators

Situation: The team prototyped a simple LLM wrapper that answered questions about hospital metrics using general medical knowledge

Problem: In beta testing, administrators needed answers about their specific patients and operational data, not general medical information

Dilemma: Adding patient data raised HIPAA compliance concerns, but administrators needed those capabilities to do their jobs

Decision: They evolved to a RAG architecture with strict access controls, retrieving only de-identified aggregate data, and added human-in-the-loop approval for any query touching individual patient records

How: The system now has three tiers: public FAQ (simple LLM), aggregate reports (RAG with hospital data), individual records (human-in-the-loop approval required)

Result: 40% reduction in time for administrators to generate operational reports, with full audit trails for compliance

Lesson: Architecture evolution should be driven by actual user needs, not anticipated ones. Start simple, measure usage, and evolve based on real gaps.
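The three-tier design in this case study can be sketched as a small router. This is an illustration under assumptions, not the startup's actual code: `Tier`, `route`, and `AUDIT_LOG` are hypothetical names, and the two boolean flags are assumed to come from an upstream classification step that the case study does not describe.

```python
from enum import Enum
from typing import List, Tuple


class Tier(Enum):
    PUBLIC_FAQ = "simple LLM"
    AGGREGATE = "RAG over de-identified aggregate data"
    INDIVIDUAL = "human-in-the-loop approval required"


# In-memory stand-in for an audit trail; a real system would persist this.
AUDIT_LOG: List[Tuple[str, str]] = []


def route(query: str, touches_patient_record: bool, needs_hospital_data: bool) -> Tier:
    """Pick the most restrictive tier the query requires and record the decision."""
    if touches_patient_record:
        tier = Tier.INDIVIDUAL
    elif needs_hospital_data:
        tier = Tier.AGGREGATE
    else:
        tier = Tier.PUBLIC_FAQ
    AUDIT_LOG.append((query, tier.name))
    return tier


if __name__ == "__main__":
    print(route("Average bed occupancy last month?", False, True))
```

Checking the most restrictive condition first means a query that matches several tiers always lands in the one with the strongest oversight.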

Section Summary

AI product architectures exist on a spectrum from simple LLM wrappers to complex multi-agent systems. The right architecture depends on your requirements for latency, accuracy, cost, and oversight. YAGNI principles apply strongly to AI systems because actual needs are often revealed only in production. Every production AI product needs observability, evaluability, and recoverability regardless of complexity level.