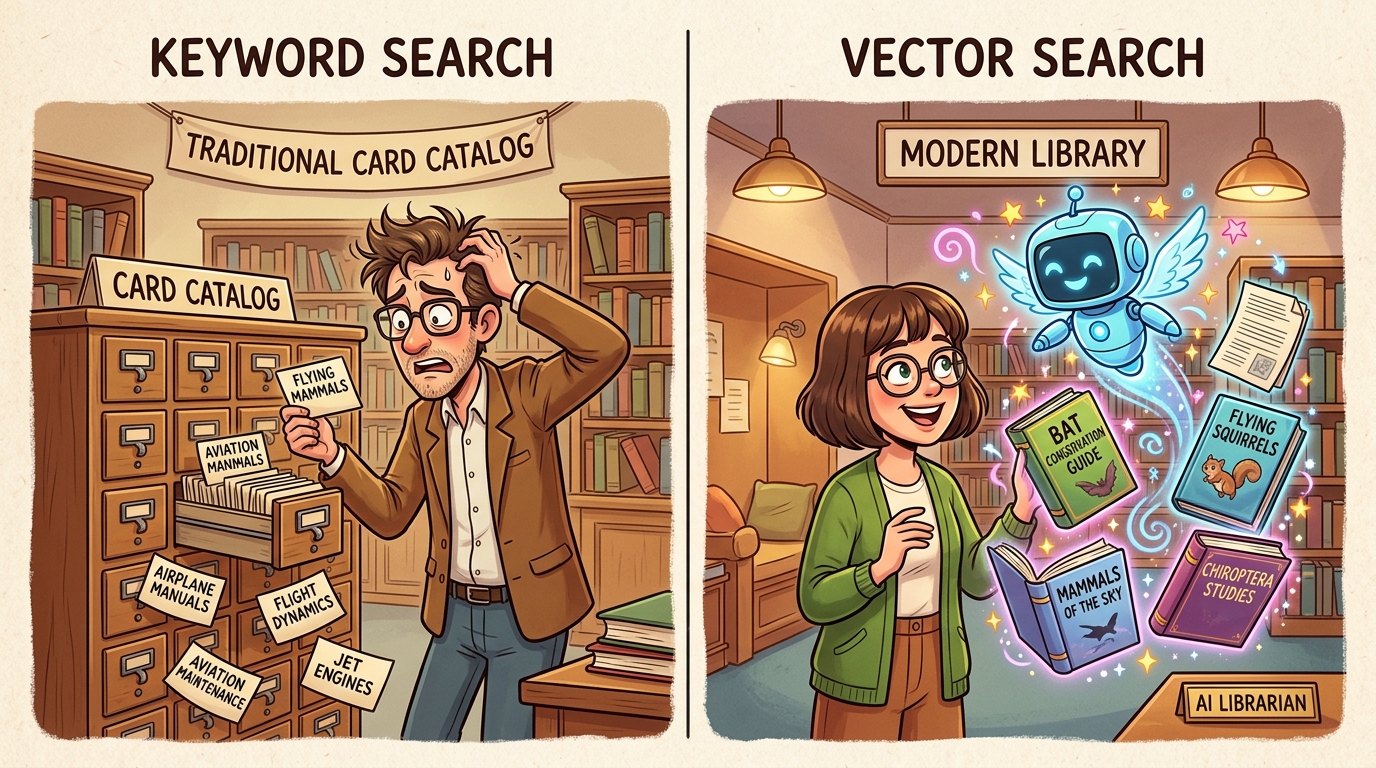

Building retrieval systems requires all three disciplines working together. AI PM defines what knowledge the product needs access to and how relevance should be measured. Vibe coding experiments with different retrieval strategies, chunking approaches, and ranking algorithms to find what works. AI Engineering implements the retrieval pipeline, vector database, and caching that make retrieval fast and reliable.

Use vibe coding to probe retrieval behavior before investing in production RAG systems. Quickly test different embedding models, chunking strategies, and query formulations against your actual data. Prototyping retrieval variants this way surfaces failure modes such as irrelevant context, missing top results, and chunk boundary issues before they affect users, which reduces the time spent debugging retrieval in production.
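
As an illustration of this kind of probing, the sketch below compares chunk sizes and overlaps on a toy document. It is a minimal sketch under stated assumptions: the helper names (`chunk_words`, `bow`, `cosine`, `top_chunk`) are hypothetical, and a bag-of-words cosine score stands in for a real embedding model so the example runs with the standard library alone.

```python
import math
import re
from collections import Counter


def chunk_words(text: str, size: int, overlap: int = 0) -> list[str]:
    """Split text into chunks of `size` words; neighbors share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # the last chunk already reached the end
            break
    return chunks


def bow(text: str) -> Counter:
    """Bag-of-words vector; an assumed stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_chunk(query: str, chunks: list[str]) -> tuple[float, str]:
    """Return (score, chunk) for the chunk most similar to the query."""
    q = bow(query)
    return max(((cosine(q, bow(c)), c) for c in chunks), key=lambda p: p[0])


if __name__ == "__main__":
    # Toy document; replace with your actual data when probing for real.
    doc = (
        "Orders ship within five business days. Refunds are accepted "
        "within thirty days of delivery. Contact support to start a refund."
    )
    for size, overlap in [(6, 0), (6, 3), (12, 4)]:
        score, best = top_chunk("how long is the refund window", chunk_words(doc, size, overlap))
        print(f"size={size} overlap={overlap} score={score:.2f} best={best!r}")
```

Running the same query across several settings makes chunk boundary issues visible: when a fact is split across two chunks, the best-scoring chunk contains only half of it.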

PMs must decide: What is the acceptable staleness of retrieved content? How do retrieval failures map to user-facing failures? When should the system say "I do not know" versus guessing? These decisions directly shape how reliable users perceive the product to be. PMs should define explicit data freshness requirements, establish escalation paths for when retrieval fails, and determine which high-stakes domains require human verification rather than AI retrieval. Retrieval quality often matters more than model quality for user trust.
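
One way to make these decisions explicit is to encode them as a policy object that the retrieval layer checks before the model answers. The following is a minimal sketch, not a prescribed design: `RetrievedChunk`, `RetrievalPolicy`, `decide`, the score scale, and the three outcome labels are all hypothetical names and assumptions introduced for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class RetrievedChunk:
    text: str
    score: float            # relevance score; a 0-to-1 scale is assumed here
    last_updated: datetime  # when the source content was last refreshed


@dataclass(frozen=True)
class RetrievalPolicy:
    max_age: timedelta         # acceptable staleness of retrieved content
    min_score: float           # below this, say "I do not know" instead of guessing
    high_stakes: bool = False  # domains that require human verification


def decide(
    chunks: list[RetrievedChunk], policy: RetrievalPolicy, now: datetime
) -> tuple[str, list[RetrievedChunk]]:
    """Return ("answer" | "abstain" | "escalate", usable chunks)."""
    usable = [
        c
        for c in chunks
        if now - c.last_updated <= policy.max_age and c.score >= policy.min_score
    ]
    if not usable:
        return "abstain", []       # nothing fresh and relevant: do not guess
    if policy.high_stakes:
        return "escalate", usable  # hand off to a human reviewer
    return "answer", usable


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    policy = RetrievalPolicy(max_age=timedelta(days=30), min_score=0.5)
    chunks = [
        RetrievedChunk("Current pricing page", 0.82, now - timedelta(days=3)),
        RetrievedChunk("Archived pricing page", 0.91, now - timedelta(days=400)),
    ]
    action, usable = decide(chunks, policy, now)
    print(action, [c.text for c in usable])
```

Keeping the thresholds in one object means the staleness and abstention rules are product decisions that can be reviewed and changed, rather than constants buried in pipeline code.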

Objective: Master retrieval architectures from basic RAG to advanced knowledge systems.

Chapter Overview

This chapter covers the engineering decisions that determine how retrieval systems find and supply context to AI models. RAG design is grounded in the quality of your actual data. Vector database and embedding model choices shape what can be retrieved and how quickly. Chunking strategies are tuned to specific document types. Advanced retrieval patterns, including reranking and hybrid search, improve on basic similarity search. Systematic measurement frameworks make retrieval quality visible. The chapter also covers how to recognize when RAG is the wrong choice and which alternatives to apply.

Four Questions This Chapter Answers

- What are we trying to learn? How to build retrieval systems that consistently provide the right context to AI models at the right time.

- What is the fastest prototype that could teach it? A simple RAG prototype with your actual data that reveals whether retrieval quality is the bottleneck in your AI pipeline.

- What would count as success or failure? Success means garbage-in-garbage-out failures are caught and measured at the retrieval stage; failure means they stay hidden downstream in model behavior.

- What engineering consequence follows from the result? Retrieval quality often matters more than model quality; investment in retrieval infrastructure typically has higher ROI than model upgrades.
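
Measuring retrieval on its own, separately from the model's final answer, can be sketched with two standard metrics: recall@k and mean reciprocal rank (MRR). This is a minimal sketch under stated assumptions: the function names and the document IDs are hypothetical, and it assumes you have a small labeled set of queries with known relevant documents.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(runs: list[tuple[list[str], set[str]]], k: int) -> dict[str, float]:
    """Average both metrics over (retrieved_ids, relevant_ids) pairs."""
    if not runs:
        raise ValueError("need at least one labeled query")
    n = len(runs)
    return {
        f"recall@{k}": sum(recall_at_k(r, rel, k) for r, rel in runs) / n,
        "mrr": sum(reciprocal_rank(r, rel) for r, rel in runs) / n,
    }


if __name__ == "__main__":
    # Hypothetical labeled queries: ranked retrieved IDs and the relevant IDs.
    runs = [
        (["doc-7", "doc-2", "doc-9"], {"doc-2"}),
        (["doc-4", "doc-1", "doc-3"], {"doc-3", "doc-8"}),
    ]
    print(evaluate(runs, k=3))
```

If these numbers are low, the problem sits in retrieval, and a model upgrade is unlikely to fix it.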

Learning Objectives

- Design effective RAG systems grounded in actual data quality

- Choose vector databases and embedding models appropriately

- Optimize chunking strategies for your specific document types

- Implement advanced retrieval patterns including reranking and hybrid search

- Evaluate retrieval quality with systematic measurement frameworks

- Recognize when RAG is the wrong choice and apply alternatives
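
To give a flavor of the hybrid search objective above, the sketch below merges a keyword ranking and a vector ranking with reciprocal rank fusion (RRF), one common fusion technique. It is an illustrative assumption rather than the method this chapter prescribes: the function name and document IDs are hypothetical, and `k=60` is the conventional default constant for RRF.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked ID lists; each appearance adds 1 / (k + rank)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by ID for stable output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


if __name__ == "__main__":
    keyword_hits = ["doc-a", "doc-b", "doc-c"]  # e.g. from a keyword index
    vector_hits = ["doc-b", "doc-c", "doc-d"]   # e.g. from a vector index
    print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
```

Because RRF uses only rank positions, it avoids having to reconcile keyword scores and vector similarity scores that live on different scales.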